My Experiments Stopped, What Happened?

Why Did My Convert Experiments Stop & How to Restart Them

| Author: | George F. Crewe |

THIS ARTICLE WILL HELP YOU:

Understand Why Experiments Stop

Convert Experiments is designed to encourage testing best practices. For example, many teams wait until a test reaches their chosen confidence threshold and has run long enough to collect meaningful data before drawing conclusions.

An experiment may appear to have “stopped” because its status changed from Active to Completed, because individual variations were paused automatically, or because a maximum runtime or visitor limit was reached.

Depending on your settings, an experiment can complete automatically when one of the following happens:

- The maximum visitors limit for the experience is reached
- The minimum running time and maximum running time conditions are both met
- A variation winner is detected after the minimum running time is met
- All losing variations are stopped and only the winning experience remains active
- Planned sample size is reached and your automation settings allow the test to complete
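
The conditions above can be read as a simple checklist. The following Python sketch is only an illustration of that checklist, not Convert's implementation or API: every class, field, and function name is hypothetical, and it assumes that an unset minimum running time counts as already met and that "only the winning experience remains active" means exactly one variation is still active.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Settings:
    # Hypothetical names; None means the limit is not configured.
    max_visitors: Optional[int] = None
    min_days: Optional[int] = None
    max_days: Optional[int] = None
    planned_sample_size: Optional[int] = None
    complete_at_planned_sample: bool = False


@dataclass
class State:
    visitors: int
    days_running: int
    winner_detected: bool
    active_variations: int


def completion_reasons(settings: Settings, state: State) -> List[str]:
    """Return every listed condition that currently holds (empty list: keep running)."""
    reasons = []
    # Assumption: an unset minimum running time counts as already met.
    min_met = settings.min_days is None or state.days_running >= settings.min_days
    if settings.max_visitors is not None and state.visitors >= settings.max_visitors:
        reasons.append("max visitors reached")
    if min_met and settings.max_days is not None and state.days_running >= settings.max_days:
        reasons.append("running time limits met")
    if min_met and state.winner_detected:
        reasons.append("winner detected")
    # Assumption: one active variation means only the winner is left.
    if state.active_variations == 1:
        reasons.append("only one variation still active")
    if (settings.complete_at_planned_sample
            and settings.planned_sample_size is not None
            and state.visitors >= settings.planned_sample_size):
        reasons.append("planned sample size reached")
    return reasons


settings = Settings(max_visitors=20000, min_days=7, max_days=30)
state = State(visitors=20000, days_running=10, winner_detected=False, active_variations=3)
print(completion_reasons(settings, state))  # ['max visitors reached']
```

In this example the experiment would be reported as complete only because of the visitor cap, which is the kind of setting worth checking first when a test stops unexpectedly.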

Automations

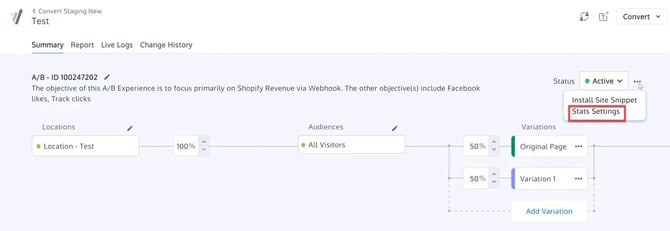

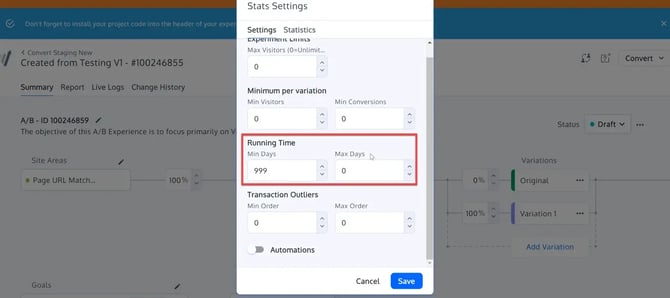

You can manage these settings from Stats & Settings in the Experience Summary.

Convert includes several automation options that can affect whether an experiment keeps running or completes automatically:

1) Keep Winning Variation Running

When the experiment is marked as completed, the winning variation can remain active and continue receiving traffic.

2) Stop Losing Variations

When a variation is identified as performing worse than the others with sufficient confidence, it can be stopped automatically and its traffic redistributed across the remaining active variations.
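
As a rough illustration of what redistribution means for traffic shares, the sketch below drops a stopped variation and rescales the rest. It assumes the freed traffic is shared in proportion to the remaining variations' existing weights; the article does not state how Convert actually splits it, and the function and variation names are hypothetical.

```python
from typing import Dict


def redistribute_traffic(weights: Dict[str, float], stopped: str) -> Dict[str, float]:
    """Drop `stopped` and rescale the remaining weights so they sum to 100."""
    remaining = {name: w for name, w in weights.items() if name != stopped}
    total = sum(remaining.values())
    if total <= 0:
        raise ValueError("no active variation left to receive traffic")
    # Assumption: proportional rescaling of the surviving weights.
    return {name: 100.0 * w / total for name, w in remaining.items()}


before = {"original": 40.0, "variation_a": 40.0, "variation_b": 20.0}
after = redistribute_traffic(before, stopped="variation_b")
print(after)  # {'original': 50.0, 'variation_a': 50.0}
```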

3) Keep Running Until Confidence

This automation helps prevent confusion when a fixed-horizon frequentist test reaches its planned sample size but has not yet reached the confidence threshold.

When this setting is enabled:

- The experiment does not auto-complete just because progress reaches 100%
- The experiment keeps running until the planned sample size is reached and the configured confidence threshold is met
- If confidence has not been reached yet, the experiment continues collecting data until significance is achieved or you stop it manually

When this setting is disabled:

- The experiment can complete automatically once the planned sample size is reached, even if the confidence threshold has not yet been met
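
The enabled and disabled behaviors described above can be summarized in a few lines of Python. This is a minimal sketch under stated assumptions, not Convert's code: the function and parameter names are hypothetical, it models only the planned-sample-size trigger for a fixed-horizon frequentist test, and it ignores other limits such as maximum visitors or maximum running time.

```python
def completes_at_planned_sample(
    visitors: int,
    planned_sample_size: int,
    confidence: float,
    confidence_threshold: float,
    keep_running_until_confidence: bool,
) -> bool:
    """Model only the planned-sample-size trigger for a fixed-horizon frequentist test."""
    if visitors < planned_sample_size:
        return False  # progress is still below 100%
    if keep_running_until_confidence:
        # Enabled: 100% progress alone is not enough; confidence must be met too.
        return confidence >= confidence_threshold
    # Disabled: reaching the planned sample size is sufficient.
    return True


# Same data, different setting: 100% progress at 90% confidence with a 95% threshold.
print(completes_at_planned_sample(10000, 10000, 0.90, 0.95, True))   # False
print(completes_at_planned_sample(10000, 10000, 0.90, 0.95, False))  # True
```

With the setting enabled, the first call keeps the experiment running; with it disabled, the same data lets the experiment complete.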

Important Notes

- Keep Running Until Confidence is available at both the Project level and the Experiment level
- When Automations are turned on, this option is intended to be enabled by default
- This setting applies to fixed-horizon Frequentist experiments
- It does not affect Sequential or Bayesian methodologies
- Existing automation options such as Keep Winning Variation Running and Stop Losing Variations continue to work as expected

Why an Experiment May Complete Earlier Than Expected

If your experiment completed and you did not expect it to, review the following:

- Was a maximum visitors limit reached?
- Was a maximum running time configured?
- Did a variation already meet your confidence threshold?
- Was Stop Losing Variations enabled, causing losing variations to be paused automatically?
- Was Keep Running Until Confidence disabled, allowing the experiment to complete once planned sample size was reached?

If you want your fixed-horizon frequentist experiment to continue beyond 100% progress until the confidence threshold is reached, make sure Keep Running Until Confidence is enabled.

Restarting Your Experiment

Before restarting an experiment, review your Automation Settings first. Otherwise, the same conditions may cause it to complete again.

To restart your experiment:

- Go to the Experience Summary
- In the Status section, click the Completed dropdown
- Change the status back to Active
- Click Activate Experience at the bottom right of the page

Best Practice

Before launching or restarting an experiment, review:

- minimum and maximum running time
- maximum visitors
- confidence threshold
- whether losing variations should stop automatically
- whether the experiment should keep running until confidence is reached

These settings help make experiment behavior more predictable and better aligned with your testing goals.